Beyond "Vibe-Coding": A Developer's Guide to the AI Transition

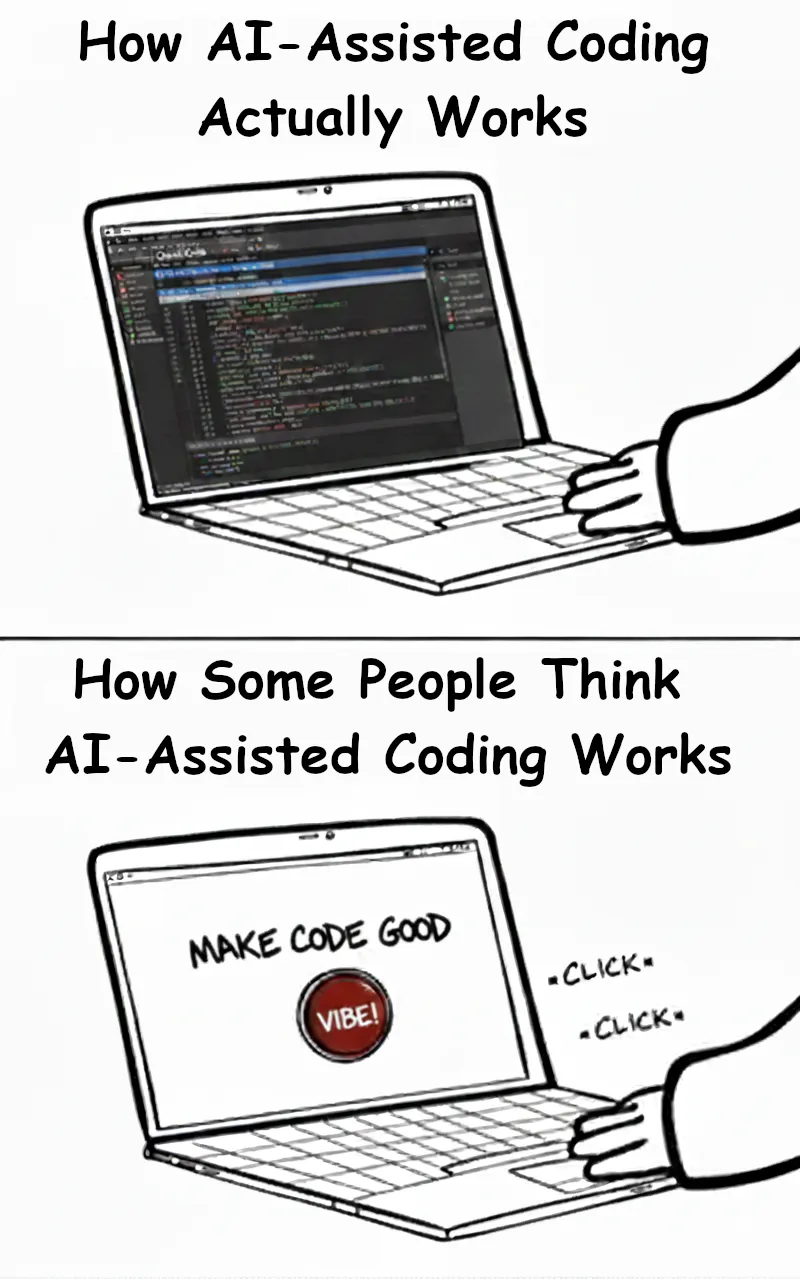

There’s a meme I came across several years ago that perfectly captures this moment in software development. It shows two panels: the top one depicts a laptop running a complex DAW with dozens of tracks, labeled “How electronic music is actually made.” The bottom panel shows a laptop with nothing but a giant red button labeled “Make Music” – captioned “How some people think electronic music is made.” I’ve created an adaptation of it as the cover image for this post.

That image keeps coming back to me as I watch the discourse around AI and coding. There’s a term that’s been everywhere lately – “vibe-coding.” Coined by Andrej Karpathy in early 2025, it suggests developers are just “vibing” their way through code, typing casual prompts while AI does all the actual work. When people hear it, they imagine a developer typing a simple prompt to build an entire app that does anything and everything they wish. Just press the button. Make software.

The term has caught on because it captures a real anxiety in our industry. Among developers, it carries pretty negative connotations – implying laziness, lack of real skill, maybe even professional negligence. There’s this underlying suggestion that developers using AI are taking shortcuts, that they don’t really understand what they’re building anymore. That they’re just… vibing.

Even before the “vibe-coding” term was coined, these connotations made me skeptical when I first started exploring AI development tools. Part of me worried I’d become dependent on AI to do my job for me.

After experimenting with AI tools and watching the industry evolve, I see things differently now. That “Make Music” button? It’s a near-perfect metaphor, but not in the way critics think. Electronic musicians know that behind that button lies a world of complexity – signal routing, effect chains, synthesis techniques. Similarly, effective AI development requires mastering context windows, prompt engineering, and collaborative debugging. The tools are powerful, but only if you know how to wield them.

This article is divided into three parts. First, I’ll share the practical skills I’ve picked up through hands-on experience with AI tools – the nuts and bolts of collaborating with these systems. Then I’ll look forward, exploring how these changes might transform our roles as developers and reshape entire teams. Some of this is based on early trends, some on conversations with other developers, and some on educated speculation.

I’ve come to believe that this isn’t about taking shortcuts or being replaced. It’s about developing an entirely new set of competencies – learning to orchestrate these systems with intention and care.

So what does that actually look like? Let’s start there.

Part 1: From Solo Coder to AI Pair Programming Lead

I made a commitment to lean into using AI tools effectively, and along the way I’ve identified a set of core skills that have fundamentally changed how I develop software. These aren’t about writing perfect prompts – they’re about understanding how to collaborate effectively with AI systems.

Understanding Your AI Partner’s Mind

Context windows as working memory

Context is everything the AI can “see” when generating a response – your current prompt, previous messages in the conversation, any code you’ve shared, documentation, everything. The context window is the limit on how much of this information the AI can process at once. Think of it like the AI’s RAM – there’s only so much it can hold at any given time.

It can be beneficial to work within these limits deliberately. When you understand that the AI can only consider a certain amount of information at once, you naturally start structuring your requests more effectively. It’s similar to working with limited memory – you need to be intentional about what information you include and when.

Token economics and practical efficiency

Tokens are the basic units AI models use to process text – think of them as chunks of words or parts of words (roughly 3-4 characters per token on average). Every prompt and response consumes tokens, and most AI services charge based on token usage. Different approaches to the same problem can have vastly different token costs.

Examples:

- Pasting minified code or lengthy JSON samples burns tokens without adding value

- Including only the function signatures of unchanged code saves tokens while maintaining context

- Breaking complex requests into steps often uses fewer total tokens than one massive prompt

- Comments and documentation in your code help AI understand context more efficiently (but keep them concise)

Developing an intuition for this is like learning to optimize for performance – but with different constraints.

The confidence calibration problem

AI tools sound equally confident whether they’re absolutely right or completely hallucinating. Watch for these red flags:

- Overly specific version numbers that don’t exist

- API methods or functions that sound plausible but don’t exist

- Solutions that seem suspiciously elegant

- Imports from packages that feel “almost right” but aren’t quite

Writing Prompts That Work

Scope your tasks precisely

Instead of lengthy explanations, be specific and focused: “Add error handling to the payment processor, focusing on network timeouts and invalid card formats.” The AI needs clear boundaries, not background stories.

Plan before you build

For complex changes, ask the AI to propose a plan before writing any code. A prompt like “Plan how you would implement user authentication for this app, then wait for my feedback” lets you catch structural issues before they’re baked into the code. This is especially valuable for multi-file changes or features that touch several parts of the system. You can align on approach, flag potential issues, and refine the plan together – which is much cheaper than refactoring after the fact.

Show, don’t tell

One clear example beats paragraphs of description. Rather than explaining a complex pattern, show what you want: “Format the output like this: {status: 'success', data: {...}, timestamp: '...'}.” The AI immediately understands the structure you’re looking for.

Use images when words fall short

Most modern AI tools can process images, and a screenshot is often worth a thousand tokens. Paste error messages, design mocks, architecture diagrams, or UI bugs directly into your prompt rather than trying to describe them. This is particularly useful for frontend work where you’re trying to match a design, or for debugging where the visual context matters. If you’re not including visuals and find yourself writing lengthy descriptions of what something looks like, that’s a sign to just show it instead.

Request structured outputs

When you need consistent results, explicitly ask for specific formats – JSON structures, markdown tables, whatever makes sense. It’s like defining an interface: once you set the structure, you can rely on it.

Use negative constraints effectively

Telling the AI what NOT to do often works better than trying to specify everything you want. “Don’t use any external libraries” or “Avoid modifying the existing tests” can guide the AI toward better solutions than exhaustive positive requirements.

Strategic Context Management

Phase-based development

Break projects into phases that fit naturally into a context window:

- Phase 1: Set up the data model

- Phase 2: Build the API endpoints

- Phase 3: Create the frontend components

Each phase should have clear goals and bounded context.

A related principle: avoid asking the AI to work across multiple abstraction layers at once. Asking for “a React component that fetches data from an API and stores it in a database” forces the AI to juggle too many concerns simultaneously. Instead, define your interfaces and types first, then let the AI focus on one layer at a time – the database schema, then the API endpoints, then the frontend components. This mirrors how you’d break down work for a human collaborator and produces cleaner, more modular results.

Be selective about included context

Include:

- Core business logic and custom implementations

- Interface definitions and type signatures

- Project-specific conventions and patterns

Skip:

- Test files (unless you’re debugging tests)

- Build configurations and boilerplate

- Generated code or vendor files

Documentation as persistent memory

Create AI-readable documentation that acts as persistent context across sessions. Most tools have their own conventions – CLAUDE.md for Claude Code, .cursorrules for Cursor – though AGENTS.md is emerging as a cross-tool standard. You might also encounter llms.txt, which serves a similar purpose for websites and documentation sites, helping AI tools understand which pages to prioritize.

Include:

- Project conventions (“We use snake_case for database fields but camelCase in the API”)

- Architectural decisions and their rationale

- Known gotchas and exceptions

- Summary of what’s been built and what’s planned

Before ending a session, ask the AI to summarize progress – this becomes your handoff for the next session.

Here’s what makes this particularly effective: AI is actually good at keeping documentation updated if you make it part of the workflow. When the AI makes changes to your code, ask it to update the relevant documentation at the same time. This turns a traditional weakness – docs that drift out of sync with the code – into a strength. The key is consistency: if you treat documentation updates as part of every task, the AI will maintain context that helps it (and you) in future sessions.

Git commits as checkpoints

Commit frequently to create stable states you can return to if things go wrong. Each commit represents a known-good configuration you can reference or restore. Your commit messages become part of the project’s context for future sessions.

Active Collaboration & Quality Control

Recognize when AI is struggling and when to intervene

Watch for these patterns that indicate confusion:

- Overly complex solutions to simple problems

- Repeating similar attempts with slight variations

- Suddenly changing approach without explanation

- Adding unnecessary abstractions or over-engineering

When you see these signs, pause and either clarify requirements, provide an example, or approach from a different angle. But if the AI is making progress toward the goal – even with an unexpected approach – let it continue.

Review AI output for security issues

AI tools often suggest functional but insecure code. Always check for:

- Missing input validation or sanitization

- SQL queries vulnerable to injection

- Hardcoded credentials or API keys

- Overly permissive CORS settings

- Sensitive data exposed in logs or error messages

Treat AI-generated code with the same security scrutiny as code from a junior developer who hasn’t learned secure coding practices yet.

Let your toolchain be your safety net

Linters, formatters, type checkers, and CI pipelines become more important when working with AI – not less. These deterministic tools catch issues that might slip past both you and the AI during review. A strict TypeScript config, a well-configured ESLint setup, or a comprehensive test suite acts as a contract that AI-generated code must satisfy. Think of it as defense in depth: you review the code, the AI checks its work against tests, and your toolchain catches everything else. If you’re not already using these tools rigorously, adopting AI is a good reason to start.

Master iterative refinement

Don’t expect perfect output on the first try. Learn to guide improvements iteratively. Start with “create a user processing function,” then refine with “add validation,” then “handle missing fields gracefully.” Sometimes it’s faster to make small fixes yourself and feed them back to the AI rather than explaining what’s wrong. The AI learns from your corrections within the session.

Know the consistent blind spots

Certain problems regularly challenge AI tools:

- Date math with time zones

- Complex regular expressions

- Recursive algorithms

- Race conditions and async timing issues

- Platform-specific quirks and browser inconsistencies

For these, either handle them yourself or provide very explicit guidance with examples.

Set clear validation criteria

Write test cases before asking the AI to implement features. This creates a clear contract for what needs to be built. The AI can check its work against tests, and you have confidence the core requirements are met regardless of implementation approach.

Choosing Your Tools Wisely

Match tools to tasks

Each AI tool has its strengths:

- Complex refactoring and multi-file changes need conversational tools with large context windows. Tools like Claude Code or Cursor’s Agent Mode can hold enough context to work across multiple files coherently.

- Rapid autocomplete tools excel at boilerplate and familiar patterns. GitHub Copilot and Supermaven are fast and unobtrusive for this kind of work.

- Chat interfaces work best for exploration and architectural discussions. Claude.ai, ChatGPT, or your IDE’s built-in chat can help you think through approaches before committing to code.

Evaluate cost-effectiveness

Develop a sense for when AI actually saves time:

- Boilerplate and repetitive code: Almost always worth it

- Complex business logic: Often worth it with good tests

- Simple utilities: Often faster to write yourself

- Exploratory debugging: Usually worth the conversation

- Documentation: Huge time saver

- Regex and SQL queries: Helpful but always verify

These skills have made a real difference in how I work. But they’ve left me with a question I suspect I’m not alone in asking: if AI handles more of the implementation, what does our role become?

Part 2: From Implementer to Problem-Solver

The Shifting Landscape

If you’re a recent graduate or early-career developer, you’re entering the field at a complicated moment. Employment for software developers aged 22-25 has declined by nearly 20% from its 2022 peak, while employment for older developers continues to grow. It’s natural to wonder whether AI is making junior roles obsolete. But the reality is more nuanced – the skills that get you hired are shifting, and understanding that shift matters more than ever when you’re just starting out.

The shift isn’t limited to entry-level roles. Research suggests AI’s impact follows a “seniority bias” pattern – it’s changing what employers expect at every experience level. Junior roles are declining because AI can handle many traditional starter tasks, but mid-level positions are evolving too as routine implementation work becomes increasingly automated. The traditional career ladder – simple tickets, then complex features, then system design – is being compressed and rearranged.

What does this mean for the hiring landscape? Employers are looking for different signals. They’re less interested in whether you can implement a standard authentication flow and more interested in whether you understand when to use one. Knowing React or Python syntax has become table stakes – the differentiators are knowing which problems are worth solving in the first place, how to architect solutions that AI tools can help build, when to push back on requirements that don’t make sense, and building the trust that makes teams want to work with you. These are the skills that get you hired now, and they’re learnable regardless of where you are in your career.

The New Core Competencies

Working with AI tools has highlighted certain skills that are becoming even more critical:

System design and architecture

AI agents are great at building components, but they can’t design systems. They don’t understand how different services should communicate, where to put boundaries, or how to plan for scale. These decisions require understanding business context, growth patterns, and technical trade-offs that go far beyond any single coding task.

Trade-off analysis

Every technical decision involves trade-offs: performance vs. maintainability, development speed vs. technical debt, cost vs. scalability. AI can outline the options, but it can’t weigh them against your specific business constraints, team capabilities, or strategic goals. This kind of judgment becomes more valuable as implementation becomes commoditized.

Data modeling and API contracts

These are the blueprints that AI agents follow. A well-designed data model or API contract shapes everything that comes after. AI might suggest a schema, but understanding the domain deeply enough to know what will and won’t work? That still requires human expertise.

Security, compliance, and scalability

AI lacks the context for these critical concerns. It doesn’t know your industry’s regulations, your company’s security policies, or your actual traffic patterns. AI will suggest architectures that would work beautifully… until they hit production load or a security audit.

The Rapid Prototyping Reality

The ability to create working prototypes in hours is changing development, but it brings new challenges too.

”Demo-able” vs. “production-ready”

Building a compelling demo in an afternoon is now possible. The problem? Stakeholders see a polished prototype and assume the project is 90% done. In reality, it might be 20% done. Error handling, edge cases, performance optimization, accessibility, security hardening – all the things that make software actually production-ready still take time.

The POC trap

This pattern is becoming common: build a proof-of-concept in 2 hours with AI assistance, stakeholder loves it, assumes the production version will take 4 hours. In reality, it takes 4 weeks. The speed of initial development creates dangerous expectation mismatches.

Knowing when NOT to prototype

Sometimes thinking beats building. Quickly throwing together a prototype can actually slow things down if it sends the team in the wrong direction or creates false expectations. Recognizing when a whiteboard session or written proposal serves better than functioning code is a valuable skill.

Balancing rapid delivery with long-term maintainability

AI-generated code often works but isn’t always maintainable. It might solve today’s problem while creating tomorrow’s technical debt. Developing a sense for when “good enough” really is good enough, and when to slow down and build something that will last, matters more than ever.

Becoming the Translation Layer

Developers are increasingly becoming translators between different worlds – business and technology, stakeholder vision and technical reality.

Converting vague requirements into testable acceptance criteria

“Make it user-friendly” needs to become “Users can complete checkout in under 3 clicks with clear error messages at each step.” This translation work is crucial – AI needs clear specifications to build the right thing, and stakeholders need to understand what they’re actually asking for.

Distinguishing requirements from implementation details

Stakeholders often mix what they want with how they think it should be built. Separating the actual need from the suggested solution is becoming essential. AI can’t do this filtering – it’ll build exactly what you ask for, even if what you’re asking for isn’t what you actually need.

Managing expectations about AI capabilities

There’s a growing need to explain what AI can and can’t do. Yes, we can prototype that feature in hours. No, that doesn’t mean it’s production-ready. Yes, AI can generate code quickly. No, it can’t make architectural decisions about your specific business constraints.

Strategic meeting participation

Being selective about which meetings actually need developer presence is becoming more important. Status updates? Probably not. Requirements gathering and discovery sessions? Essential. These conversations capture the “why” behind decisions – context that never makes it into tickets but fundamentally shapes what should be built.

Understanding the unwritten constraints

Every organization has them – the politics, the technical debt no one’s allowed to touch yet, the stakeholder who must approve certain changes. AI doesn’t know that the legacy system everyone complains about is still there because it handles edge cases no one’s documented. This institutional knowledge continues to shape every technical decision.

The Irreplaceable Human Layer

Despite all the automation, certain aspects of development remain fundamentally human.

Ethical considerations AI won’t flag

Is this feature potentially addictive? Could this algorithm discriminate against certain users? Will this data collection feel creepy? AI doesn’t raise these concerns – it optimizes for the specifications you give it.

Business context that exists between the lines

Why did we really sunset that feature? What’s the history between these two departments? Why does this stakeholder’s opinion carry extra weight? This context shapes every decision but never appears in documentation.

The “should we?” beyond “can we?”

AI is great at figuring out how to build something. It can’t tell you whether you should build it. Should we add this feature that only 5% of users want? Should we refactor this working but messy code? Should we pivot based on this user feedback? These decisions require human judgment about priorities, resources, and strategy.

Understanding unwritten rules and cultural factors

Every team has its own culture, its own way of working, its own unwritten rules. AI doesn’t know that your team values documentation over test coverage, or that your company prioritizes shipping fast over perfect code. These cultural factors determine what solutions actually work in practice.

If individual developers need to evolve this much, imagine what happens to entire teams and organizations. The changes ahead may be even more fundamental.

Part 3: How Teams Will Transform

The Shifting Team Dynamics

The trend is becoming clear: teams are likely to achieve more with fewer people. Not necessarily the “2-3 developers” that some futurists predict, but meaningfully smaller ratios. One developer with AI agents may be able to handle what previously required a small team. The multiplier effect will vary – simple CRUD applications might see 10x productivity gains, while complex systems might see 2x – but the direction seems consistent.

This shift may create new roles and responsibilities we haven’t fully anticipated yet. We could see the rise of “AI output auditors” who verify generated code meets standards. Pair programming might evolve to include AI as a third collaborator. Code reviews may shift from “is this correct?” to “should AI have generated this?”

Some startups are already experimenting with this model – small teams attempting to produce enterprise-level outputs. Whether this proves sustainable or whether they’re accumulating hidden technical debt remains to be seen.

Rethinking Planning Cycles

Many enterprises still operate on quarterly planning cycles with multi-day sessions to align teams and set delivery dates. This approach is becoming increasingly disconnected from reality. When AI can prototype solutions in hours, 3-month commitments can become obsolete before the planning session even ends.

The shift may be unavoidable: from big-batch planning to continuous discovery with weekly pivots. Teams that cling to synchronized “release trains” risk being lapped by those shipping daily.

Top-heavy frameworks that require multiple layers of approval struggle to match AI’s iteration speed. Dependency management boards become less relevant when AI can rapidly bridge integration gaps. The emerging model: small autonomous teams operating within strategic guardrails rather than detailed roadmaps. Think less “here’s exactly what to build” and more “here’s the problem to solve and the constraints to work within.”

When Story Points Stop Working

Traditional estimation is getting harder. Story points assume predictable effort-to-output ratios, but AI breaks this completely. A “5-point story” could take 5 minutes with AI assistance or 5 days of careful oversight. The same task might be trivial with the right AI tool or impossible if the AI hits a blind spot.

Some teams may start to abandon points for outcome-based metrics: experiments run, hypotheses tested, value delivered. The question shifts from “How many features did we ship?” to “How many ideas did we validate?”

When building becomes cheap but understanding remains expensive, speed of learning matters most. “Failed fast” becomes a success metric when experiments cost almost nothing. Teams that optimize for time-to-insight rather than time-to-delivery may have the advantage.

The Documentation and Knowledge Crisis

Here’s an emerging problem: AI generates code faster than humans can document intent. A developer with AI assistance might produce hundreds of lines of code in an hour, but the business logic and decision rationale behind that code? That’s not being captured anywhere.

The “why” behind code is becoming more important than the “how” – yet we’re generating more “how” than ever before. This gap between code generation and intent documentation could become a serious liability. When institutional knowledge shapes technical decisions but code is generated so quickly that reasoning is never captured, organizations risk losing what makes them unique.

We’re potentially heading toward a new form of technical debt: code that works but that no one fully understands. Not because it’s badly written, but because it was generated so quickly that the reasoning was never documented. The urgent need for AI guardrails and audit trails isn’t just about compliance – it’s about maintaining comprehensibility in rapidly evolving codebases.

Preparing for This Future

For teams looking to adapt, start small. Use AI tools for code reviews or test generation. Build comfort with the technology before betting everything on it.

Documentation strategies need to evolve. If we’re generating code faster, we need to be even more disciplined about documenting the “why” – the business decisions, the trade-offs considered, the constraints that shaped the solution. AI can’t capture this context.

Establish AI governance before it becomes critical. Not heavy-handed policies, but lightweight guidelines about code review standards, security checks, and accountability chains.

Training should be universal, not just for early adopters. Teams that only enable their “innovation champions” while leaving everyone else behind will create more problems than they solve.

And be honest about measurement. Track what AI actually improves and what it doesn’t. Not everything will be better with AI, and pretending otherwise helps no one.

The Uneven Road Ahead

The adoption of these changes won’t be uniform. Startups are running full-speed with AI agents while enterprises move cautiously, and for good reason. Regulated industries – healthcare, finance, government – will require human oversight for years to come. Geographic disparities in AI tool access will create different speeds of evolution.

Some teams will thrive with these changes. Others will struggle. The differentiator might not be technical skill but adaptability and willingness to evolve.

We’re not heading toward a utopia of 10x productivity or a dystopia of mass unemployment. We’re entering a messy middle where some teams soar while others struggle, where new problems replace old ones, and where human judgment becomes more valuable precisely because there’s more automation.

The teams that thrive may not be those who adopt AI fastest, but those who thoughtfully integrate it while preserving what makes them unique. The human elements – creativity, judgment, institutional knowledge, and the ability to ask “should we?” – become more valuable, not less, in an AI-augmented environment.

These changes won’t happen overnight, and they won’t happen uniformly. But for those willing to adapt, there’s reason for optimism.

Conclusion: No Magic Button

We started with that meme – the laptop with a giant red button labeled “Make Music.” Anyone who’s actually worked with electronic music knows how far this is from reality. The layers of complexity, the technical knowledge, the creative decisions that go into every track – none of that fits on a button.

The term “vibe-coding” carries that same oversimplification. It suggests we’re just casually prompting our way through development, letting machines do the real work while we vibe. But having spent real time with these tools, I can say the reality is far more interesting and complex.

Like the electronic musician who combines synthesizers, samples, and effects to create something entirely new, developers working with AI agents are learning to conduct intelligent systems. We’re not being replaced – we’re being amplified. But that amplification only works if we evolve our skills from coding to orchestrating.

The skills gap isn’t about learning to write better prompts. It’s about becoming fluent in a new form of development where human creativity, judgment, and strategic thinking matter more than ever. Where understanding business problems deeply matters more than syntax. Where knowing when NOT to use AI is as important as knowing how to use it.

Your path forward will be unique to your context. Start where you are. Experiment with AI tools on small, low-risk projects. Document what works and what doesn’t. Build your intuition for when AI helps and when it hinders. Most importantly, don’t see this as a threat to defend against but as a capability to integrate thoughtfully.

There is no magic button. There never was. Just developers learning to work in new ways, one experiment at a time.

And honestly? That’s more interesting anyway.